We provide a sketch recommendation system which helps the user to draw and interactively generate realistic images.

Oliver Wang², Alexei A. Efros²,³, Philip H.S. Torr¹, Eli Shechtman²

¹ University of Oxford, ² Adobe Research, ³ UC Berkeley

In ICCV 2019

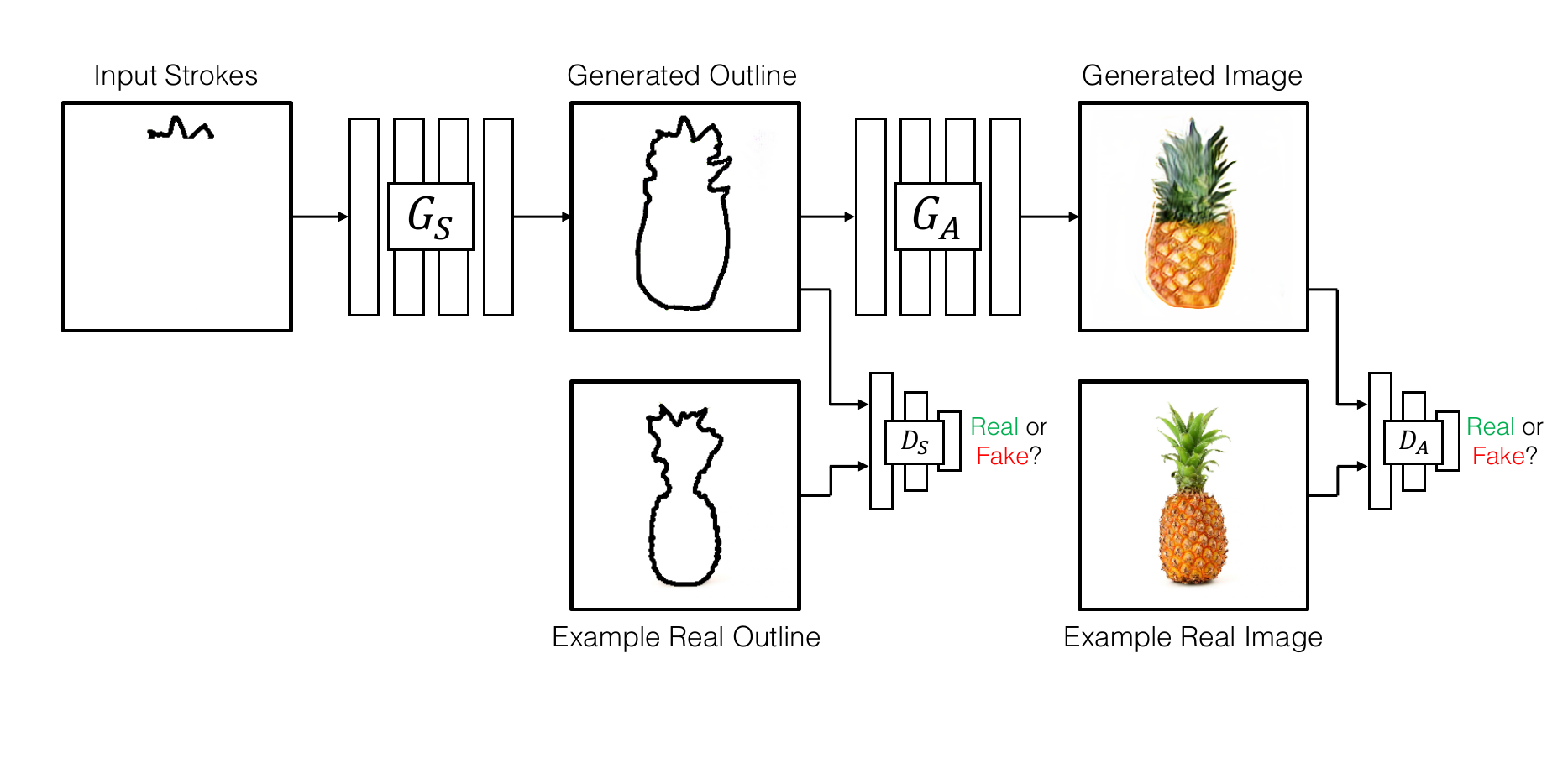

We propose an interactive GAN-based sketch-to-image translation method that helps novice users easily create images of simple objects. The user starts with a sparse sketch and a desired object category; the network then recommends one or more plausible completions of the sketch and shows a corresponding synthesized image. This enables a feedback loop in which the user can edit the sketch based on the network's recommendations, while the network is better able to synthesize the image the user has in mind. To use a single model for a wide array of object classes, we introduce a gating-based approach for class conditioning, which allows us to generate distinct classes without feature mixing from a single generator network.
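
As a rough illustration of what gating-based class conditioning can look like, the sketch below scales the residual branch of a block by a per-class, per-channel gate. This is a minimal NumPy sketch under stated assumptions, not the architecture from the paper: the name `GatedBlock`, the 1x1-convolution stand-in, and the random initialization are all hypothetical and chosen only to keep the example small.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class GatedBlock:
    """Hypothetical residual block whose branch is scaled by a class-dependent gate."""

    def __init__(self, num_classes, channels, rng):
        # One gate logit per (class, channel). These would be learned in a real
        # model; they are randomly initialized here for illustration only.
        self.gate_logits = rng.normal(size=(num_classes, channels))
        # Channel-mixing weights acting as a stand-in for a 1x1 convolution.
        self.weight = rng.normal(scale=0.1, size=(channels, channels))

    def forward(self, features, class_id):
        # features has shape (channels, height, width).
        branch = np.tensordot(self.weight, features, axes=([1], [0]))
        branch = np.maximum(branch, 0.0)  # ReLU
        # Gate values lie in (0, 1) and depend only on the selected class.
        gate = sigmoid(self.gate_logits[class_id])
        # A gate near 0 bypasses the branch; a gate near 1 uses it fully.
        return features + gate[:, None, None] * branch


rng = np.random.default_rng(0)
block = GatedBlock(num_classes=3, channels=4, rng=rng)
x = rng.normal(size=(4, 8, 8))
out_class0 = block.forward(x, class_id=0)
out_class1 = block.forward(x, class_id=1)
```

In this toy version, the same weights serve every class, and only the gates decide how strongly each channel of the branch contributes, so two classes can route the same input through different effective paths of one shared network.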

The user makes an input stroke, and the shape generator network gives multiple shape completions based on the selected class. The appearance generator takes one of the shape completions and generates a realistic image from it.
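
The two-stage flow described above can be sketched as follows. This is only an outline of the data flow under stated assumptions: `complete_shape` and `render_appearance` are hypothetical stand-ins for the shape and appearance generators, they use random noise and a trivial rendering rule instead of trained networks, and they ignore `class_id`, which a real model would condition on.

```python
import numpy as np


def complete_shape(partial_sketch, class_id, num_completions, rng):
    """Stand-in for the shape generator: returns several candidate outlines."""
    completions = []
    for _ in range(num_completions):
        # Random extra strokes are a placeholder for sampling a trained generator.
        extra = (rng.random(partial_sketch.shape) > 0.9).astype(partial_sketch.dtype)
        # Every completion keeps the strokes the user has already drawn.
        completions.append(np.maximum(partial_sketch, extra))
    return completions


def render_appearance(outline, class_id):
    """Stand-in for the appearance generator: maps an outline to an RGB image."""
    height, width = outline.shape
    image = np.ones((height, width, 3), dtype=np.float32)  # white canvas
    image[outline > 0] = 0.0  # draw outline pixels in black
    return image


rng = np.random.default_rng(0)
sketch = np.zeros((16, 16), dtype=np.float32)
sketch[4, 2:10] = 1.0  # a single user stroke

candidates = complete_shape(sketch, class_id=0, num_completions=3, rng=rng)
chosen = candidates[0]  # in the interactive system, the user picks one
image = render_appearance(chosen, class_id=0)
```

Separating the stages this way means the user only ever edits and selects outlines, while the image is always regenerated from the currently chosen outline.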

A. Ghosh, R. Zhang, P. Dokania, O. Wang, A. Efros, P. Torr, E. Shechtman. Interactive Sketch & Fill: Multiclass Sketch-to-Image Translation. In ICCV, 2019. (hosted on arXiv)

Acknowledgements